Proxmox Storage 101 – Figuring Out Where All Your Stuff Actually Lives

So you’ve got Proxmox installed, you spun up a VM, and everything is shiny and new.

And then at some point you think:

“Uh… where is this actually stored? And what happens if this drive dies?”

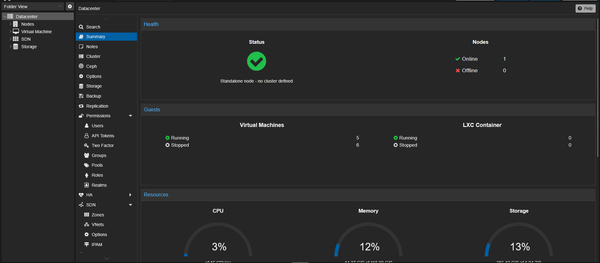

I had the exact same moment the first time I clicked around Datacenter → Storage and saw stuff like local, local-lvm, zfspool, directory, NFS, whatever. It felt like Proxmox was speaking a slightly different dialect of “Linux storage” than I was used to.

This post is my attempt to explain Proxmox storage in plain language, the way I wish someone had explained it to me.

By the end, you should have a decent mental model of:

- How Proxmox thinks about storage

- What to use for VM disks vs backups vs ISOs

- How to add a new disk and actually make Proxmox use it

No magic, just wiring.

1. How Proxmox Thinks About Storage (The Simple Version)

Proxmox doesn’t just say “this disk” or “that disk.” It thinks in terms of storage definitions.

A storage in Proxmox is just a named location where it can put stuff, like:

- VM disks

- Container disks

- ISO images

- Backups

- Templates

- Little snippets / cloud-init configs

Each “storage” can sit on top of:

- A physical disk (or partition)

- A directory (like /mnt/bigdisk)

- A ZFS pool

- An LVM/LVM-Thin volume group

- A network share (NFS, CIFS/SMB)

- Fancy things like iSCSI or shared storage in clusters

You can see them all under Datacenter → Storage.

So the flow is basically:

Physical disk → formatted / put into ZFS/LVM/etc → Proxmox sees that as a named storage → you assign that storage to VMs/containers/backups.

If you remember nothing else from this post, remember this:

Storage = a label in Proxmox for “this is where your stuff goes.”
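
If you like the shell more than the GUI, the same list is available there too. This is a minimal sketch, assuming you’re logged into the node as root: pvesm is the Proxmox storage manager CLI and /etc/pve/storage.cfg is the file the definitions are kept in. The show_storages wrapper and its guard are my own additions, there only so the snippet stays harmless on a machine that isn’t a Proxmox node.

```shell
# List every storage definition Proxmox knows about.
show_storages() {
    if command -v pvesm >/dev/null 2>&1; then
        pvesm status                # name, type, status, total/used/available
        cat /etc/pve/storage.cfg    # the raw definitions behind the GUI
    else
        echo "pvesm not found: run this on a Proxmox node"
    fi
}

show_storages
```

Every entry you see in that file is one “label” in the sense above: an ID, a type, and where it points.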

2. The Storage Types You’ll Actually Care About

There are a bunch of options, but as a home self-hoster you’ll probably touch these first:

- Directory

- LVM / LVM-Thin

- ZFS

- NFS / CIFS

Let’s go through them quickly.

2.1 Directory Storage (the “Just a Folder” Option)

This one is the easiest mentally.

A directory storage is literally just a folder on a filesystem, like:

- /var/lib/vz (the default)

- /mnt/bigdrive

- /mnt/nas/proxmox

You tell Proxmox: “Hey, this folder exists and you’re allowed to put [backups / ISOs / etc.] in here.”

Good for:

- ISO images

- Backups

- Templates

- Sometimes VM disks (especially for testing or if you really want them as regular files)

Pros:

- Dead simple

- Works on pretty much anything (ext4, XFS, etc.)

- Easy to see and manage from the CLI

Cons:

- No fancy thin provisioning / snapshots from the storage itself (VM disks saved as qcow2 files can still do both)

- Depends on whatever filesystem is under it

On a fresh Proxmox install, local is usually a directory storage.

2.2 LVM & LVM-Thin

LVM is the classic Linux Logical Volume Manager.

Proxmox uses it a lot under the hood.

- LVM:

  - Good performance

  - Carve a disk into logical volumes

  - Not super flexible with snapshots in the Proxmox GUI

- LVM-Thin:

  - Same idea, but with a thin pool

  - Supports thin provisioning (VM disk says 100 GB but only uses what’s actually written)

  - Plays nicely with Proxmox snapshots and clones

On many installs, local-lvm is an LVM-Thin storage and is where your VM disks go by default.

If you want “just works” and don’t care about ZFS yet, LVM-Thin is totally fine.
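
To see thin provisioning in action, you can ask LVM how much of each volume is really written. A rough sketch, assuming the volume group is called pve (the name the installer normally uses; yours may differ). The thin_usage helper name and the fallback messages are mine, not anything Proxmox ships.

```shell
# Show how full the thin pool and each thin volume really are.
# Assumes the default volume group name "pve"; pass another name if needed.
thin_usage() {
    vg="${1:-pve}"
    if command -v lvs >/dev/null 2>&1; then
        lvs -o lv_name,lv_size,data_percent,metadata_percent "$vg" \
            || echo "could not read volume group $vg (are you root?)"
    else
        echo "lvs not found: LVM tools are not installed here"
    fi
}

thin_usage pve
```

The data_percent column is the interesting one: a 100 GB VM disk that has only had a few GB written to it will show a small percentage there.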

2.3 ZFS (The Fancy Nerd Favorite)

ZFS is like a filesystem + volume manager rolled into one. Proxmox supports it natively, and you even get an option to install Proxmox on ZFS.

Why people are obsessed with ZFS:

- Built-in checksums (catches silent data corruption)

- Very nice snapshots & clones

- Transparent compression

- Easy mirroring and RAID-like options (mirror, RAIDZ1/2/3)

Typical uses in a homelab:

- A ZFS pool for storing VM disks and containers

- A ZFS pool for general “NAS-like” data

Downsides:

- Likes RAM. It can run on 4 GB, but if you’re already tight on memory with VMs, you’ll feel it.

- A bit more to learn. Not hard, just more knobs.

If you’re the kind of person building a Proxmox box “for fun,” you’ll probably end up playing with ZFS at some point.
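
On the RAM point: one common way to keep ZFS from competing with your VMs is to cap its cache (the ARC) with the zfs_arc_max module option. This isn’t something the steps in this post depend on, just a sketch of the arithmetic. The 4 GiB figure is an arbitrary example and arc_max_line is a made-up helper name.

```shell
# Print the modprobe line that caps the ZFS ARC at a given number of GiB.
arc_max_line() {
    gib="$1"
    echo "options zfs zfs_arc_max=$((gib * 1024 * 1024 * 1024))"
}

arc_max_line 4
# → options zfs zfs_arc_max=4294967296

# To apply it on a node (as root), something along these lines:
#   arc_max_line 4 > /etc/modprobe.d/zfs.conf   # overwrites that file
#   update-initramfs -u -k all                  # needed when root is on ZFS
# and then reboot.
```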

2.4 NFS & CIFS/SMB (Network Stuff)

These two let Proxmox use storage that lives on another machine, like your NAS.

- NFS: Common on NAS / Linux servers. Plays really nicely with Proxmox.

- CIFS/SMB: The Windows share world (\\NAS\share type stuff).

Common pattern in homelabs:

- Local SSD / disk for VM disks

- NAS (NFS share) for backups + ISOs

You can run VMs directly from NFS if you’ve got a decent network and NAS. I would not bother with VM disks over SMB unless you have a really good reason and know what you’re doing.

3. What Goes Where? A Simple Mental Model

If you’re unsure where to put what, here’s an easy rule set.

VM & container root disks:

- ✅ ZFS pool

- ✅ LVM-Thin

- ✅ Directory on a local SSD/HDD (not “wrong” at all, just less fancy)

- ✅ NFS (if your network + NAS are solid)

- ❌ SMB/CIFS over Wi-Fi (and honestly I’d avoid it even wired for VM disks)

Backups:

- ✅ NFS share to your NAS

- ✅ SMB share to NAS/another box

- ✅ Big local HDD mounted somewhere as a directory

ISOs & templates:

- ✅ Any directory storage (local or NAS)

- Whatever’s convenient and doesn’t fill up your VM disk space

Short version:

Fast/reliable storage for running VMs, cheap/big storage for backups.

4. Adding a New Disk in Proxmox (Realistic Example)

Let’s say you just shoved a new 2 TB HDD or SSD into your Proxmox box and you want to use it.

4.1 Find the disk

SSH into the node (or use the web shell) and run:

lsblk

You might see something like:

sda 100G # Proxmox boot / root

sdb 1863.0G # new 2 TB disk

We’ll assume /dev/sdb is the new disk.
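
Since the next steps can wipe a disk, it’s worth asking lsblk for a bit more detail before trusting a device name. A small sketch: the column names are standard lsblk ones, while the show_disks wrapper is just my way of keeping the snippet safe to run anywhere.

```shell
# Show size, type, filesystem, mountpoint and model for disks and partitions.
show_disks() {
    if command -v lsblk >/dev/null 2>&1; then
        lsblk -o NAME,SIZE,TYPE,FSTYPE,MOUNTPOINT,MODEL \
            || echo "lsblk could not read block devices"
    else
        echo "lsblk not found"
    fi
}

show_disks
```

A brand new disk should show no FSTYPE and no MOUNTPOINT. If the one you’re about to format shows either, stop and double-check.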

4.2 Option A: Use It as Simple Directory Storage (Good for Backups)

If you just want a big place for backups, ISOs, etc., this is the straightforward option.

- Partition & format it:

  parted /dev/sdb -- mklabel gpt
  parted /dev/sdb -- mkpart primary ext4 0% 100%
  mkfs.ext4 /dev/sdb1

  (You can also do this with fdisk if you prefer; I’m not religious about tools here.)

- Create a mountpoint:

  mkdir -p /mnt/bigstorage

- Grab the UUID:

  blkid /dev/sdb1

  Copy the UUID="..." bit.

- Add it to /etc/fstab:

  nano /etc/fstab

  Add something like:

  UUID=YOUR-UUID-HERE /mnt/bigstorage ext4 defaults 0 2

  Save, then:

  mount -a

  If there are no errors, you’re good.

- Tell Proxmox about it:

  - Go to Datacenter → Storage → Add → Directory

  - ID: bigdisk (or whatever name you like)

  - Directory: /mnt/bigstorage

  - Content: tick what you want (e.g. VZDump backup file, ISO image)

  - Node: your Proxmox node

  - Hit Add

Now you have a big chunk of space Proxmox can target for backups or whatever.
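
If you’d rather script the last two steps, here’s a rough sketch. The fstab_line and run helpers are my own (hypothetical names); run only prints the command unless you set DRY_RUN=0, so you can read it before anything changes. The pvesm content names backup and iso correspond to VZDump backup file and ISO image in the GUI.

```shell
# DRY_RUN=1 (the default here) prints each command instead of running it.
DRY_RUN="${DRY_RUN:-1}"
run() {
    if [ "$DRY_RUN" = "1" ]; then echo "+ $*"; else "$@"; fi
}

# Build the /etc/fstab entry for an ext4 partition from its UUID.
fstab_line() {
    echo "UUID=$1 $2 ext4 defaults 0 2"
}

# On the node you would fetch the real UUID with:
#   uuid="$(blkid -s UUID -o value /dev/sdb1)"
uuid="YOUR-UUID-HERE"
fstab_line "$uuid" /mnt/bigstorage

# CLI counterpart of Datacenter → Storage → Add → Directory.
run pvesm add dir bigdisk --path /mnt/bigstorage --content backup,iso
```

Printing the fstab line rather than appending it automatically is deliberate: a bad line in /etc/fstab can stop the box from booting cleanly, so it’s worth eyeballing before it goes in.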

4.3 Option B: Use It as ZFS Storage (Good for VMs)

If you want this disk to be a ZFS pool for VM disks:

⚠️ This will wipe the disk. Triple-check the device name first.

From the Proxmox web UI:

- Go to Node → Disks → ZFS → Create: ZFS

- Name the pool something like tank

- Select the disk (sdb)

- Choose RAID level (with one disk it’s just a basic pool, no redundancy)

- Click Create

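Then add it as storage. (The Create: ZFS dialog has an Add Storage checkbox; if you left it ticked, Proxmox has already added the pool as a storage named after it, and you can skip this part unless you want your own ID.)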

- Go to Datacenter → Storage → Add → ZFS

- ID: zfs-vmstore (or similar)

- ZFS Pool: tank

- Content: Disk image and maybe Container

- Node: your node

- Click Add

Now when you create or move VM disks, zfs-vmstore will show up as a target.
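
For reference, the CLI route instead of the GUI looks roughly like this. It’s a sketch under the same assumptions as above (pool tank, disk /dev/sdb, storage ID zfs-vmstore). The ashift=12 option is a common choice for modern disks and is my addition, and the run helper (a hypothetical name) only prints the commands by default because the first one destroys whatever is on the disk.

```shell
# DRY_RUN=1 (the default here) prints each command instead of running it.
DRY_RUN="${DRY_RUN:-1}"
run() {
    if [ "$DRY_RUN" = "1" ]; then echo "+ $*"; else "$@"; fi
}

# Create a single-disk pool (no redundancy). This wipes /dev/sdb.
run zpool create -o ashift=12 tank /dev/sdb

# Register the pool with Proxmox for VM disks (images) and containers (rootdir).
run pvesm add zfspool zfs-vmstore --pool tank --content images,rootdir
```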

5. Adding Network Storage (NFS Example)

If you have a NAS (Synology, TrueNAS, Unraid, whatever), NFS + Proxmox is a nice combo.

On your NAS:

- Create an NFS share, e.g. /volume1/proxmox

- Allow your Proxmox node’s IP to access it (rw)

On Proxmox:

- Go to Datacenter → Storage → Add → NFS

- ID: nas-backup

- Server: IP of your NAS

- Export: pick the NFS export from the dropdown

- Content: usually VZDump backup file, ISO image, maybe Container template

- Node: your node

- Click Add

Now you’ve got off-box storage for backups, which is already a big improvement over “everything on one disk.”
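
The same thing from the shell, as a sketch. The IP address is a placeholder I made up, the run helper (hypothetical name) just prints the commands unless DRY_RUN=0, and pvesm scan nfs assumes a reasonably recent Proxmox VE (older releases called it pvesm nfsscan).

```shell
# DRY_RUN=1 (the default here) prints each command instead of running it.
DRY_RUN="${DRY_RUN:-1}"
run() {
    if [ "$DRY_RUN" = "1" ]; then echo "+ $*"; else "$@"; fi
}

NAS_IP="192.168.1.50"   # hypothetical address, replace with your NAS

# List the exports the NAS offers to this node.
run pvesm scan nfs "$NAS_IP"

# Add the share for backups, ISO images and container templates.
run pvesm add nfs nas-backup --server "$NAS_IP" \
    --export /volume1/proxmox --content backup,iso,vztmpl
```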

6. Moving an Existing VM Disk to New Storage

Once you’ve added new storage, you’ll probably want to move things around a bit.

- Go to your VM → Hardware

- Click the disk (e.g. scsi0)

- Click Disk Action → Move Storage

- Choose your new storage (zfs-vmstore, bigdisk, whatever)

- Tick Delete source if you want to free up the old space after the move

- Confirm

Proxmox handles the copying and updates the VM config. Depending on disk size and speed, it may take a bit.
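
There’s a CLI version of this as well, handy if you have several disks to shuffle. A sketch, assuming a VM with ID 100 (made up for the example) and a recent Proxmox VE where the subcommand is qm disk move (older releases spell it qm move_disk). The run helper is a hypothetical name and prints the command by default.

```shell
# DRY_RUN=1 (the default here) prints each command instead of running it.
DRY_RUN="${DRY_RUN:-1}"
run() {
    if [ "$DRY_RUN" = "1" ]; then echo "+ $*"; else "$@"; fi
}

VMID=100   # hypothetical VM ID

# Move scsi0 to zfs-vmstore; --delete 1 matches ticking "Delete source".
run qm disk move "$VMID" scsi0 zfs-vmstore --delete 1
```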

7. A Simple Starting Layout That Won’t Bite You Later

If you’re just starting out and don’t feel like overthinking it, here’s a layout that’s simple and sane:

- Disk 1 (OS disk):

  - Proxmox installed here

  - Keep local as a directory for:

    - ISO images

    - Templates

    - Maybe a couple test VMs

- Disk 2 (bigger / better disk):

  - Either LVM-Thin or ZFS

  - Add storage vmstore for:

    - VM disks

    - Containers

- NAS (if you have one):

  - NFS share

  - Added as nas-backup for:

    - Backups

    - Optional extra ISOs

That gives you:

- A clear separation between OS, VM storage, and backups

- A place to blow things up (test VMs) without risking your main stuff

- A story to tell yourself about “where things live,” which is honestly half the battle

8. Common “Oh No” Moments (So You Can Skip Them)

Some stuff I learned the slightly painful way:

- Everything on local-lvm with no backups

  Works great until the disk fills up or dies. Then it’s not great.

- ZFS on very low-RAM hardware

  It works, but if you’re already tight on memory with VMs, you’ll feel it.

- No separation between VM disks and backups

  If the same physical disk holds your running VM and the backup of that VM, it’s better than nothing, but… not by much.

- SMB for VM disks over anything flaky

  Just don’t. Use NFS for this kind of thing if you can, and keep it wired.

9. Where to Go From Here

Once you’re comfortable with storage basics, nice next steps are:

- Setting up scheduled backups (and doing a test restore at least once)

- Looking into Proxmox Backup Server on another box or VM

- Using snapshots more intentionally (before big upgrades, etc.)

- Playing with ZFS snapshots and replication if you end up with more than one machine
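
As a taste of the first item, a one-off backup from the shell looks roughly like this. It’s a sketch: VM ID 100 is made up, nas-backup is the NFS storage from earlier, and the run helper (hypothetical name) only prints the command unless DRY_RUN=0. Scheduled jobs themselves are easier to set up under Datacenter → Backup.

```shell
# DRY_RUN=1 (the default here) prints each command instead of running it.
DRY_RUN="${DRY_RUN:-1}"
run() {
    if [ "$DRY_RUN" = "1" ]; then echo "+ $*"; else "$@"; fi
}

VMID=100   # hypothetical VM ID

# Back up the VM while it keeps running, compressed with zstd.
run vzdump "$VMID" --storage nas-backup --mode snapshot --compress zstd
```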

But honestly, you don’t need to go deep to be “good enough” for a homelab:

- Know where your VM disks live

- Know where your backups live

- Try not to keep both on the same spinning rust disk

That’s basically Proxmox storage 101.